The mean value provides a central expected value, and the standard deviation indicates how widely a series of data is distributed from its mean value. In particular, we wish to understand and calculate air temperature Shannon entropy of the environment and infer its meaning.Ī statistical analysis provides an overall picture of a distribution. Therefore, our primary motivation is to understand more fundamental aspects of the environment using temporal and spatially distributed data. A spatial distribution of the data could help us to understand variation in a specific environmental variable over a given region. Although this way of collecting data provides information on the distribution of temperature over time, it does not necessarily provide a good knowledge of its distribution over space. Over recent years technology has evolved rapidly, with enormous advances in electronics enabling the development of miniature sensing devices to collect environmental data-thereby creating the opportunity to utilize these devices in the environmental research, and for many environmental applications, a large number of these sensors are needed.Įnvironmental parameters, for example temperature, are measured over time at a weather station providing data for that location. An ideal system would contain a large number of tiny sensors distributed within an environmental sensing domain providing real-time spatio-temporal distributed environmental data. In any application, many particles (or entities) are required in order to estimate entropy. We have extended the application of the concept of entropy by applying it to environmental air temperature data coming from microsensors and observe correlation with environmental phenomena, such as high wind speed, heavy rainfall.

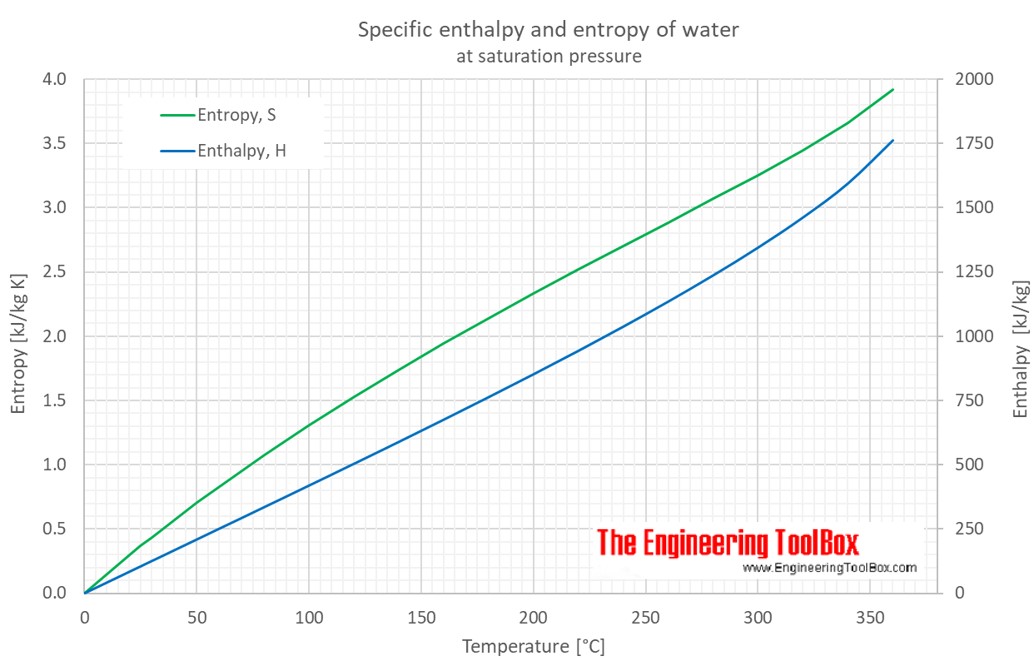

Considering that ecohydrological systems are too complex for specific variable-to-variable interactions to be represented individually, these authors used statistical concepts, including entropy, to study the aggregate behaviour of these systems. Ruddell and Kumar adopted an information-theoretical statistical method called “transfer entropy” for measuring the information flow between ecohydrological variables using time-series data. Such techniques become necessary, and feasible, when the number of species interactions is very large, as is the case in most plant-based communities. They regarded individual species-to-species interactions as random events and so were able to predict aggregate behaviour leading to various biodiversity outcomes using statistical mechanics concepts. Shipley et al. adopted a similar approach to predict the ways in which biodiversity varies within ecological communities, based on the particular traits of the plant species within these communities. This enables the aggregate behaviour of large numbers of molecules to be predicted using statistical concepts, such as entropy. The statistical mechanics formalism explains the macroscopic thermodynamic behaviour of a bulk system by treating the system as an assembly of microscopic interactions. Shannon entropy ) and in environmental science . entropy of black holes ), information theory (i.e. Entropy has been used in various areas of research, such as thermodynamics, cosmology (i.e. The entropy measure signifies changes in the microstates of a system and the distribution of entities therein. Since then there has been further development of the concept by Planck and Gibbs, ultimately leading to the development of statistical mechanics.

The concept of entropy from classical thermodynamics was further developed by Boltzmann who, in the 1870s, introduced a probabilistic definition based on microstates of a system. Entropy has been a subject of study within the research community since its inception in the 1850s by Rudolf Clausius , who coined the term entropy when he was analysing the Carnot cycle process.
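To make the idea of an air temperature Shannon entropy concrete, here is a minimal Python sketch. It is not the authors' method: the text does not say how readings are discretised, so the fixed-width binning, the `bin_width` default, the function name `shannon_entropy` and the sample readings are all assumptions chosen for illustration. The sketch groups readings from many sensors into bins and evaluates H = Σ p·log2(1/p) over the occupied bins, alongside the mean and standard deviation mentioned in the text.

```python
import math
import statistics
from collections import Counter


def shannon_entropy(readings, bin_width=0.5):
    """Shannon entropy, in bits, of readings grouped into fixed-width bins.

    bin_width is a hypothetical choice (same units as the readings);
    the source text does not specify a discretisation.
    """
    if not readings:
        raise ValueError("at least one reading is required")
    # Map each reading to the index of the bin it falls into.
    bins = Counter(math.floor(r / bin_width) for r in readings)
    n = len(readings)
    # H = sum over occupied bins of p * log2(1 / p), where p = count / n.
    return sum((c / n) * math.log2(n / c) for c in bins.values())


if __name__ == "__main__":
    # Made-up temperatures (degrees C) from several sensors at one instant.
    sample = [20.1, 20.2, 20.4, 21.7, 22.3, 25.1, 25.3]
    print("mean:", statistics.mean(sample))
    print("std dev:", statistics.pstdev(sample))
    print("entropy (bits):", shannon_entropy(sample))
```

If every sensor reports a value in the same bin, the entropy is zero; the more evenly the readings spread across bins, the higher it gets. The result depends on the bin width, so entropies are only comparable when computed with the same binning.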
